This is a general warning about using the CHGCMDDFT command to change the default value of command parameters for commands in QSYS.

Why Not?

There are a number of reasons not to change parameter defaults on commands in QSYS…

- Any time you upgrade your systems, those default parameter changes will be lost because the commands are completely replaced.

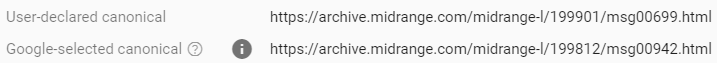

- Although you can identify which commands have had parameter defaults changed, there is no indication of WHICH parameter defaults were changed on a command. To identify the commands, display the command object’s description (DSPOBJD) with DETAIL(*SERVICE). If a command has had its parameter defaults changed, the APAR ID will show ‘CHGDFT’.

- Third-party products may expect commands to have the IBM-provided default values. Because third-party products usually have to work on a number of IBM i versions, and new parameters are added with almost every release, it’s impractical for vendors to specify an explicit value for every parameter.
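The DSPOBJD check mentioned in the list above can be sketched as follows. SAVLIB is only an example command, and QTEMP/CMDSRV is a hypothetical outfile name. I believe the APAR ID lands in field ODAPAR of the outfile, but treat that field name as an assumption and check it against the outfile's field list before relying on it.

```cl
/* Check a single command: look for an APAR ID of CHGDFT in the output */
DSPOBJD OBJ(QSYS/SAVLIB) OBJTYPE(*CMD) DETAIL(*SERVICE)

/* Check every command in QSYS by writing the service details to an  */
/* outfile (QTEMP/CMDSRV is a hypothetical name), then query the     */
/* outfile for rows where the APAR ID field (assumed: ODAPAR) is     */
/* CHGDFT, using whatever query tool you prefer                      */
DSPOBJD OBJ(QSYS/*ALL) OBJTYPE(*CMD) DETAIL(*SERVICE) +
        OUTPUT(*OUTFILE) OUTFILE(QTEMP/CMDSRV)
```

This only tells you which commands were touched. It still does not tell you which parameters were changed.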

A Better Approach

A better approach would be to create a library to hold copies of the commands you want to modify …

- Create a specific library to hold customized commands

- Add that library to the QSYSLIBL system value above QSYS

- Duplicate the *CMD objects into that library

- Change the default parameter values on the commands in that library.

This way the commands in QSYS keep the IBM-provided default parameter values. Since the custom command library is above QSYS in the system library list, applications that reference those commands without qualifying them to QSYS will use the modified commands.
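As a rough sketch, the four steps above might look like this when done by hand. The library name CUSTCMD and the SAVLIB/TGTRLS change are just illustrations, and the library list in the CHGSYSVAL value is an assumption: use whatever DSPSYSVAL shows on your system and put the new library in front of it.

```cl
/* 1. Create a library to hold the customized commands */
CRTLIB LIB(CUSTCMD) TEXT('Customized copies of QSYS commands')

/* 2. Review the current system library list, then put CUSTCMD ahead  */
/*    of QSYS. The libraries after CUSTCMD are assumed here; keep the */
/*    ones your system actually lists.                                */
DSPSYSVAL SYSVAL(QSYSLIBL)
CHGSYSVAL SYSVAL(QSYSLIBL) VALUE('CUSTCMD QSYS QSYS2 QHLPSYS QUSRSYS')

/* 3. Duplicate the command from QSYS into the custom library */
CRTDUPOBJ OBJ(SAVLIB) FROMLIB(QSYS) OBJTYPE(*CMD) TOLIB(CUSTCMD)

/* 4. Change the default on the copy only; QSYS/SAVLIB is untouched */
CHGCMDDFT CMD(CUSTCMD/SAVLIB) NEWDFT('TGTRLS(*PRV)')
```

To try the library out in a single job before changing the system value, CHGSYSLIBL LIB(CUSTCMD) OPTION(*ADD) adds it to the system portion of that job's library list only.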

I like to create a simple CL program that deletes the existing commands from the custom command library, duplicates the commands from QSYS, and changes the parameter defaults. Not only does this make it easy to recreate the custom parameter defaults after an OS upgrade, it also documents which parameter defaults have been changed.

CRTPRXCMD

You may be tempted to use the CRTPRXCMD command to create a ‘proxy command’ that points to the original command, and then change the defaults on the proxy command.

DO NOT DO THAT!

A proxy command isn’t a standalone object that is independent of the actual command. It’s just a pointer to the real command.

Any changes you make to a proxy command will actually be made to the real command.
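To make the trap concrete, here is the sequence to avoid, shown only as an illustration. The library name CUSTCMD and the SAVLIB example are assumptions, and the comment on the second command restates the behavior described above rather than something verified here.

```cl
/* DO NOT DO THIS: shown only to illustrate the problem */

/* Creates a proxy in CUSTCMD that points at the real command */
CRTPRXCMD CMD(CUSTCMD/SAVLIB) TGTCMD(QSYS/SAVLIB)

/* Looks like it changes only the CUSTCMD copy, but as described   */
/* above the change is applied to the target, QSYS/SAVLIB          */
CHGCMDDFT CMD(CUSTCMD/SAVLIB) NEWDFT('TGTRLS(*PRV)')
```

Using CRTDUPOBJ instead gives you a real, independent *CMD object whose defaults can be changed without touching QSYS.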

Example

Here’s a very simple example of a CL program that repopulates a custom command library with commands that have modified default parameter values.

PGM

DCL VAR(&LIB) TYPE(*CHAR) LEN(10) VALUE('CUSTCMD')

DCL VAR(&CMD) TYPE(*CHAR) LEN(10)

/* SAVLIB must default to *PRV target release for compatibility */

CHGVAR VAR(&CMD) VALUE('SAVLIB')

CALLSUBR SUBR(DUPCMD)

CHGCMDDFT CMD(&LIB/&CMD) NEWDFT('TGTRLS(*PRV)')

RETURN

SUBR SUBR(DUPCMD)

DLTCMD CMD(&LIB/&CMD)

MONMSG MSGID(CPF2105)

CRTDUPOBJ OBJ(&CMD) FROMLIB(QSYS) OBJTYPE(*CMD) TOLIB(&LIB)

ENDSUBR

ENDPGM
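A possible way to compile and run the program, then confirm the result. The program name RBLDCMD and the source file CUSTCMD/QCLSRC are hypothetical; substitute wherever you keep the source.

```cl
/* Compile the CL source (program and source file names are hypothetical) */
CRTBNDCL PGM(CUSTCMD/RBLDCMD) SRCFILE(CUSTCMD/QCLSRC) SRCMBR(RBLDCMD)

/* Run it after every OS upgrade to rebuild the customized commands */
CALL PGM(CUSTCMD/RBLDCMD)

/* Confirm: the copy should show APAR ID CHGDFT, the QSYS original should not */
DSPOBJD OBJ(CUSTCMD/SAVLIB) OBJTYPE(*CMD) DETAIL(*SERVICE)
DSPOBJD OBJ(QSYS/SAVLIB) OBJTYPE(*CMD) DETAIL(*SERVICE)
```

To customize another command, repeat the CHGVAR, CALLSUBR, and CHGCMDDFT group in the program with the new command name and default.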